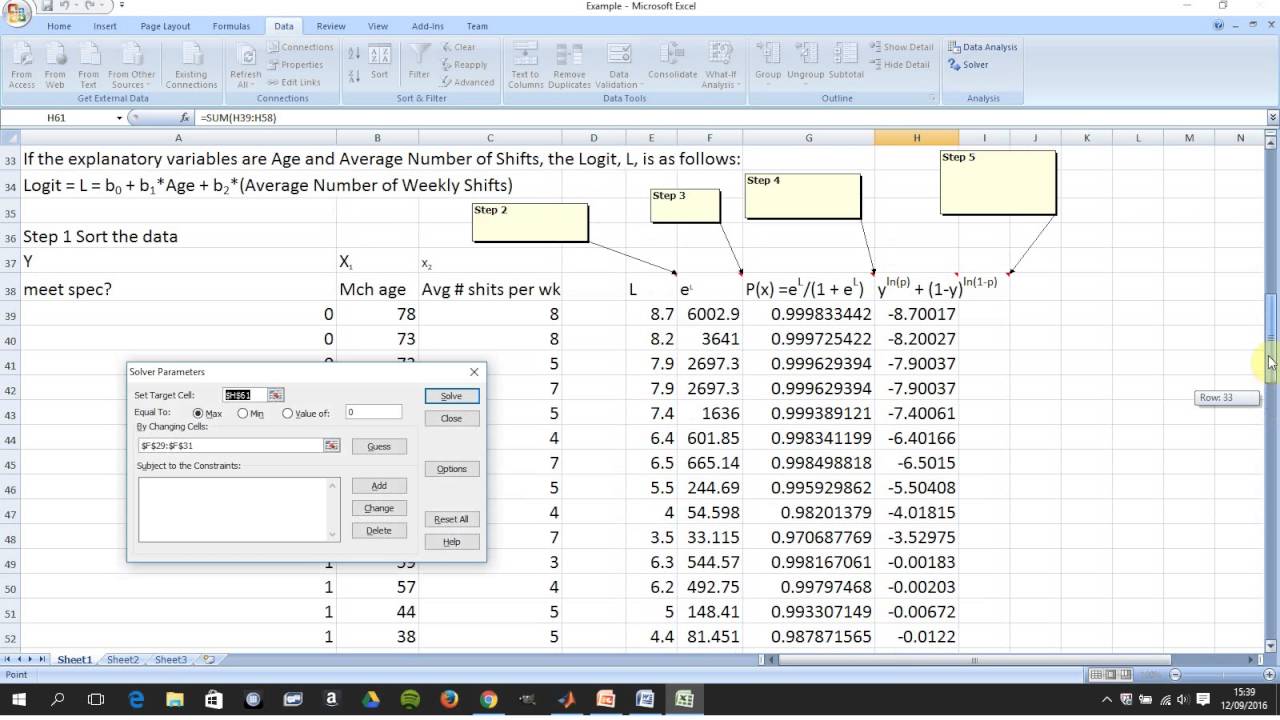

Lets restate the log likelihood function once more:Īnd lets remember that in our case Pi is modeled as: It is a little tedious but necessary to work out these derivatives analytically so we can feed them into our spreadsheet. In the notation from above F is the collection of our log likelihood function’s derivatives with respect to each beta and J is the Hessian matrix of second order partial derivatives of the likelihood function with respect to each beta. We will do this by using Newton’s numerical method. Here x is a vector of x values and J is the Jacobian matrix of first derivatives evaluated at vector xc and F is the value of the function evaluated at using latest estimates of x.Ĭoming back to our logistic regression problem what we are trying to do is to figure out a combination of beta parameters for which our log likelihood function is at a maximum which is equivalent to deriving the set of coefficients where the first derivatives of the likelihood function are all equal to zero. Same principles apply to a multivariate system of equations: This means that in each step of the algorithm we can improve on an initial guess for x using above rule. Where Xc is the current estimate of the root.

LOGIT REGRESSION EXCEL SERIES

It is a numerical method for finding a root of a function by successively making better approximations to the root based on the functions gradient (first derivative).Īs a quick example we know that we can approximate a function using first order Taylor series approximation.

Newton’s method is easiest to understand in a univariate set up.

Fortunately for us the log likelihood function has nice properties that allows us to use Newton’s method to achieve this. This cannot be done analytically unfortunately so we must resolve to using a numerical method. Therefore our approach is to take the derivative of the log likelihood function with respect to each beta and derive the value of betas for which the first derivative is equal to zero. It is much easier to work with the log of the likelihood function.įrom calculus we know that a functions maximum is at a point where its first derivative is equal to zero. The aim of the maximum likelihood method is to derive the coefficients of the model that maximize the likelihood function. We can see that when Y =1 then we have P and when Y=0 we have (1-P) The likelihood that we observe our existing sample under the assumption of independence is simply the product of the probability of each observation.

Under the assumption that each observation of the dependent variable is random and independent we can derive a likelihood function. In this set up using ordinary least squares to estimate the beta coefficients is impossible so we must rely on maximum likelihood method. In a logistic regression model we set up the equation below: The first derivative of the logistic function, which we will need when deriving the coefficients of our model, with respect to z is: We define a logistic cumulative density function as:Īnother property of the logistic function is that: We use a logistic equation to assign a probability to an event.

Now when I bring this into Excel, I bring in the coefficients, select certain values to add together, say FlowMonth2 = "Aug", Orders_Apt = "n", GeoUnits = "5", HomeOwner = "y", Platform = "Desktop", CreditScore = "800 - 900".A logit model is a type of a binary choice model. I have fitted an ordered logistic regression in R using the polr function, but I am having some trouble bringing the model coefficients into Excel and getting the probabilities there.įor explanatory variables FlowMonth2, Orders_Apt, GeoUnits, HomeOwner, Platform, CreditScore, my coefficients for the model are as follows: Value Std.